The Empathy Trap: A Product Manager's Guide to Rational Compassion

Golden Hook & Introduction

Atlas: Imagine this. You're in a product meeting. On one side, you have a heart-wrenching video testimonial from a single user, detailing how one missing feature is ruining their experience. It’s powerful. It’s emotional. On the other side, you have a spreadsheet with data from ten thousand users, showing that a different, less glamorous bug fix would improve overall engagement by five percent. Your gut screams to help the person in the video. But what if your gut is wrong? What if empathy, the very thing we're told to cultivate, is actually a trap?

Riley: That's a scenario that plays out, in some form, almost every week. It's the ultimate qualitative versus quantitative dilemma.

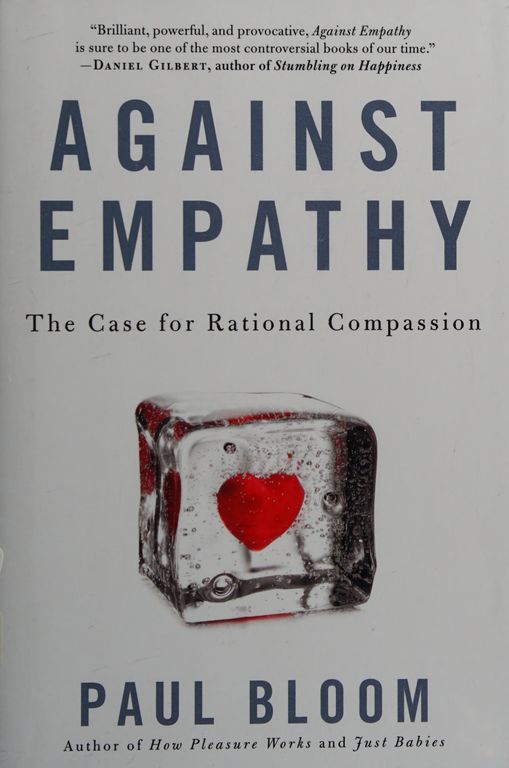

Atlas: And that's the provocative question at the heart of Paul Bloom's book, 'Against Empathy.' We're joined today by Riley, a product manager who lives this tension daily. So, Riley, that scenario I just painted… does that sound familiar?

Riley: Absolutely. It's the core of so many strategic debates. A single, vivid story has an outsized emotional weight that can dangerously skew planning. You have one person's intense pain versus a small amount of friction for thousands. The emotional pull is almost always toward the one, even if the data points toward the thousands.

Atlas: Exactly. And that's what we're digging into today. We're going to tackle this from two perspectives, based on Bloom's work. First, we'll expose the hidden dangers of empathy, what he calls the 'spotlight problem.' Then, we'll build a better way forward, exploring the powerful alternative of 'rational compassion' as a practical tool for making smarter, more impactful choices.

Deep Dive into Core Topic 1: The Spotlight Problem

Atlas: So let's start with that trap. The 'spotlight problem.' Bloom argues that empathy—the act of feeling what you think someone else is feeling—isn't a floodlight that illuminates everyone. It's a tight, narrow spotlight. It picks out one person and makes them hyper-visible, while leaving thousands, or millions, in total darkness.

Riley: And in product management, that spotlight is often aimed by whoever is loudest or tells the most compelling story.

Atlas: Precisely. To illustrate this, Bloom uses a famous, tragic story that many people might remember. The story of Baby Jessica. Let's set the scene. It's 1987, in Midland, Texas. An 18-month-old toddler named Jessica McClure is playing in her aunt's backyard and falls into an abandoned well. It's only eight inches wide, but it's 22 feet deep.

Riley: Oh, I remember hearing about this. It was a massive news event.

Atlas: It was the news event. For 58 straight hours, the world was glued to their televisions. CNN was there, broadcasting live. Rescuers were working around the clock, drilling a parallel shaft, trying to figure out how to get to her. You could hear her singing nursery rhymes from the well. The entire nation, the entire world, was holding its breath, feeling the parents' terror, feeling for this one little girl trapped in the dark.

Riley: It's the perfect recipe for an empathetic response. A single, identifiable, innocent victim. You can picture her. You can feel the fear.

Atlas: You can. And when she was finally rescued, the country erupted in joy. But here's the crucial part Bloom focuses on: the aftermath. Donations poured in. People sent money, gifts, and well-wishes. A trust fund was set up for her that grew to over 700,000 dollars. The world's empathy was converted into a massive amount of resources for one single child.

Riley: Which, on its own, feels like a good thing. A demonstration of human kindness.

Atlas: It does. But now, pull the camera back. During those same 58 hours that the world was transfixed by Baby Jessica, a statistically certain number of children died around the world. Thousands of them. They died from preventable things like malaria, malnutrition, and diarrhea. There were no cameras pointed at them. There was no single face to latch onto, no name, no nursery rhymes being sung. Just a number on a page in a UNICEF report.

Riley: The statistical victims.

Atlas: The statistical victims. The world's empathy, and its money, flowed to the one identifiable girl in the well, not the thousands of anonymous children in the abstract. That is the spotlight problem. Empathy is biased. It's drawn to the specific, the personal, the story. It is innumerate—it can't distinguish between one and a thousand.

Riley: That is a brutal and perfect analogy for product prioritization. Baby Jessica is the 'squeaky wheel' user. The one who writes a three-page email to the CEO, who posts a viral Twitter thread with screenshots, who gets everyone's attention. They are the identifiable victim of a missing feature or a bug.

Atlas: And the team feels their pain. They want to help.

Riley: Exactly. Meanwhile, the thousands of anonymous users who are suffering from a slow-loading checkout page or a confusing user interface… they are the statistical victims. They don't write long emails. They just… leave. They churn. Their frustration is silent, but its impact on the business is massive. Empathy, as Bloom defines it, can't 'feel' a 2% increase in churn rate. But that 2% could represent thousands of users.

Atlas: So you're saying empathy makes you solve the loudest problem, not necessarily the biggest one.

Riley: Yes. It biases you toward the anecdotal and away from the analytical. The real challenge for a product team isn't just to listen to stories. It's to build systems that give a voice to that silent, statistical majority. To find the 'why' behind the numbers and act on that, even when there isn't a single, tear-jerking story attached.

Deep Dive into Core Topic 2: The Blueprint for Better Decisions

Atlas: I love that framing. 'Building systems that give a voice to the silent majority.' That is the perfect bridge to Bloom's solution. Because if empathy is the flawed spotlight, we need a floodlight. He calls it 'rational compassion.'

Riley: Okay, so let's define that. Because 'rational compassion' could sound cold or robotic to some people. Like you're just looking at a spreadsheet of human problems.

Atlas: That's the key distinction he makes. It's not about being unfeeling or cold. The 'compassion' part is critical. It means you still care. You genuinely want to help, you want others to flourish, you want to alleviate suffering. The goal is the same as empathy. But the 'rational' part is about how you achieve that goal. You use your head, not just your gut. You use evidence and reason to figure out where your resources (your time, your money, your engineering hours) can do the most good for the most people.

Riley: So it's about effectiveness. It's about maximizing the positive outcome.

Atlas: Precisely. The classic example is the effective altruism movement, which is built on this principle. Let's say you have a hundred dollars to donate. Your empathetic response, maybe after seeing a sad commercial, is to give it to a local animal shelter to help one specific, cute-looking puppy. You get a warm feeling. You feel like you did a good thing.

Riley: And you did do a good thing. You helped a puppy.

Atlas: You did. But a rationally compassionate approach would ask a different question. It would ask: where can this one hundred dollars have the maximum possible impact on well-being? So you might do some research and discover an organization like the Against Malaria Foundation. And you find out that for that same hundred dollars, you could provide dozens of insecticide-treated bed nets to families in a region where malaria is rampant. And those nets, statistically, protect dozens of people from a disease that kills hundreds of thousands every year.

Riley: So the choice is between feeling good because you helped one puppy you can see, versus knowing you protected dozens of people you will never meet.

Atlas: Exactly. The goal is the same—do good. But the method is driven by data and a cost-benefit analysis of well-being. It's a floodlight. It tries to illuminate the whole landscape of need and find the most effective point of intervention.

Riley: You know, it's fascinating. This is the language of product management, just with a different vocabulary. We don't call it 'rational compassion,' we call it 'maximizing return on investment' or 'optimizing for a key performance indicator.'

Atlas: Unpack that. How does it map so directly?

Riley: Well, when we decide to build Feature A over Feature B, we're making a resource allocation choice. Our engineering time is finite, just like that hundred dollars. An empathy-driven approach might be to build the feature a very passionate, high-profile client is begging for. It feels good to make them happy. But a rational approach, our standard process, is to analyze the data. We'd ask: how many users will Feature A impact? By how much will it improve our core metric, like user retention or conversion rate? And what about Feature B?

Atlas: You run the numbers.

Riley: We run the numbers. We might even run an A/B test, where we give a new feature to a small group of users and measure its effect against a control group. We are literally using a systematic, rational process to find the most effective solution for the user base. What Bloom has done is give a philosophical and ethical framework to what many people might dismiss as a cold, corporate process. He's saying that making decisions based on data and scalable impact isn't uncaring. It's actually a more advanced, more ethical form of caring.

Atlas: It's caring about the whole community, not just the one person in your line of sight.

Riley: Right. It's caring enough to do the hard work of analysis to ensure your help is actually effective, not just emotionally satisfying.
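
For listeners who want to see what "measuring against a control group" can look like in practice, here is a minimal sketch. It assumes the metric is a simple conversion rate and uses a two-proportion z-test, which is one common choice and not something Riley specifies. The function name and all of the numbers are hypothetical.

```python
from math import erf, sqrt


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare variant B against control A on a conversion metric.

    Returns (absolute_lift, z_score, two_sided_p_value).
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that A and B convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    # Standard normal CDF written with erf, so no third-party library is needed.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    p_value = 2 * (1 - cdf)
    return p_b - p_a, z, p_value


# Hypothetical experiment: 5,000 users in each group.
lift, z, p_value = two_proportion_z_test(conv_a=500, n_a=5000, conv_b=560, n_b=5000)
print(f"lift={lift:.3f}  z={z:.2f}  p={p_value:.3f}")
```

The point of the sketch is only that the decision rests on a measured difference across the whole test group, not on how vivid any one user's story is. A real experiment would also need a sample size and significance threshold chosen in advance.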

Synthesis & Takeaways

Atlas: So, when you put it all together, the argument isn't really 'against' caring. It's against a specific, biased, and often counterproductive tool for caring. It's about upgrading your moral toolkit.

Riley: I think that's the perfect way to put it. Empathy might be the spark. It's the emotional signal that makes us aware of a problem and gives us the 'why'—we want to help our users, we want them to succeed. But it's a terrible guide for the 'how.' Rational compassion is the framework for the 'how.' It's the process you use to design the most effective, scalable, and equitable solution.

Atlas: You need the spark and the engine. The feeling and the function.

Riley: Exactly. One without the other is incomplete. All feeling with no function leads to biased, inefficient decisions like the Baby Jessica effect. All function with no feeling, no 'why,' can become aimless optimization. You need to know why you're trying to raise that metric: to improve people's lives or make their work easier.

Atlas: So for all the other product managers, analysts, and decision-makers listening, what's the one practical thing they can take away from this conversation? How do they start implementing rational compassion tomorrow?

Riley: I think it's about building a conscious check into your process. It's simple, but powerful. Next time you're in a meeting debating a feature, a fix, or any strategic decision, take a moment and force yourself and your team to answer two separate questions. First: 'How emotionally compelling is the story for this option?' Acknowledge the empathetic pull. Give it a name.

Atlas: The 'Baby Jessica' factor.

Riley: The 'Baby Jessica' factor, exactly. Then, ask the second question: 'Setting the story aside, what is the data-driven, scalable impact of this decision on our entire user base?' By explicitly separating those two lines of thinking, you can see when the emotional tail is wagging the strategic dog. It allows you to honor the human element without letting it hijack the logic. It's a simple habit that can break the empathy trap and lead to much stronger, more defensible, and ultimately, more compassionate decisions.

Atlas: A powerful framework. Separate the feeling from the function to make better choices for everyone. Riley, thank you for bringing such a sharp, strategic lens to this. It was fantastic.

Riley: My pleasure. It's a topic that hits very close to home.
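
Riley's two-question check can be sketched as a small record that keeps the two answers in separate fields, so the story and the numbers are never blended into one score. This is an illustration only. The `Option` class, the 1-to-5 `story_pull` scale, and the "users affected times expected lift" impact formula are all assumptions, and the figures loosely reuse the ten-thousand-user, five-percent scenario from the opening.

```python
from dataclasses import dataclass


@dataclass
class Option:
    name: str
    story_pull: int       # Question 1: how compelling is the story? (subjective, 1-5)
    users_affected: int   # Question 2 input: how many users does this touch?
    expected_lift: float  # Question 2 input: estimated per-user metric improvement

    @property
    def impact(self) -> float:
        # Hypothetical impact score: reach multiplied by estimated improvement.
        return self.users_affected * self.expected_lift


def empathy_trap_check(options):
    """Return the top option by story, the top by impact, and whether they differ."""
    by_story = max(options, key=lambda o: o.story_pull)
    by_impact = max(options, key=lambda o: o.impact)
    return by_story, by_impact, by_story is not by_impact


options = [
    Option("Feature from the video testimonial", story_pull=5,
           users_affected=1, expected_lift=1.0),
    Option("Unglamorous bug fix", story_pull=1,
           users_affected=10_000, expected_lift=0.05),
]

by_story, by_impact, flagged = empathy_trap_check(options)
print(f"Most compelling story: {by_story.name}")
print(f"Largest estimated impact: {by_impact.name}")
if flagged:
    print("The two answers disagree: name the 'Baby Jessica' factor before deciding.")
```

When the two answers point at different options, that is the moment to say out loud that the story is doing the pulling. The sketch does not make the decision for the team. It only makes the disagreement visible.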